TensorFlow Study Notes 8: AlexNet

AlexNet

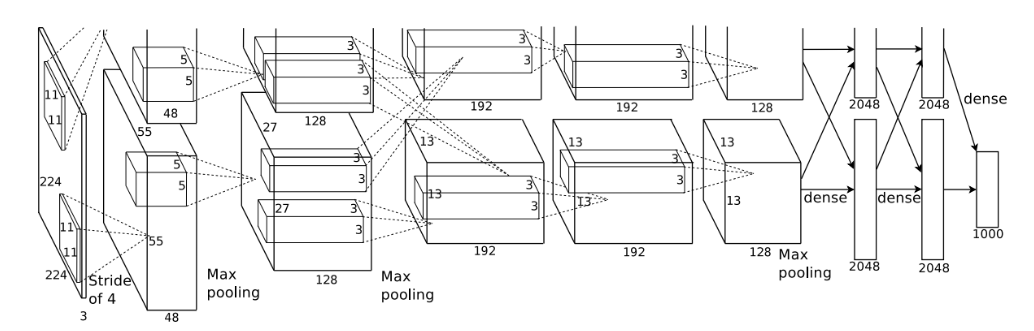

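AlexNet is a deep convolutional neural network proposed in 2012 by Alex Krizhevsky, a student of Hinton. It can be seen as a deeper and wider version of LeNet. AlexNet was the first to successfully apply several tricks in a CNN: ReLU, Dropout, and LRN. The network has about 630 million connections, 60 million parameters, and 650,000 neurons, arranged in 5 convolutional layers and 3 fully connected layers. In the ILSVRC 2012 competition AlexNet won by a wide margin with a top-5 error rate of 16.4% (the figure the paper reports for its five-network ensemble; the seven-network ensemble reached 15.3%), against 26.2% for the runner-up. The main tricks in AlexNet are: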

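- ReLU is used successfully as the CNN activation function. In deeper networks it outperforms sigmoid and mitigates the vanishing-gradient problem that sigmoid suffers from as depth grows.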

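- Dropout randomly deactivates a fraction of the neurons during training to reduce overfitting. In AlexNet, dropout is applied mainly in the fully connected layers.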

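- Overlapping max pooling. Earlier CNNs mostly used average pooling; AlexNet uses max pooling throughout, which avoids the blurring effect of average pooling. "Overlapping" means the pooling window is larger than the stride, so neighboring pooling outputs cover shared inputs, which enriches the features. AlexNet uses a 3×3 pooling window with a stride of 2 both horizontally and vertically.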

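- LRN (local response normalization) layers create competition among neighboring neurons: relatively large responses become relatively larger and neurons with weaker responses are suppressed, which improves the model's generalization.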

- CUDA is used to accelerate training of the deep network on GPUs.

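- Data augmentation: 224×224 patches are cropped at random from the 256×256 source images and flipped horizontally at random. Without augmentation, a CNN with this many parameters would overfit; augmentation reduces overfitting and improves generalization. At prediction time, the four corner patches and the center patch are extracted and each is flipped left-right, giving 10 images in total; the network predicts on all 10 and the results are averaged. The AlexNet paper also mentions applying PCA to the RGB data of the images and adding a Gaussian perturbation with a standard deviation of 0.1 along the principal components as noise, which lowers the error rate by about 1%.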
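The cropping scheme in the last item can be sketched with the standard library alone. This is a minimal illustration that only computes crop coordinates and averages prediction vectors; the function names `random_crop_box`, `ten_crop_views`, and `average_predictions` are hypothetical helpers, not part of TensorFlow or of the code in these notes.

```python
import random


def random_crop_box(height, width, crop, rng=random):
    """Return (top, left, bottom, right) of a randomly placed crop x crop window."""
    top = rng.randint(0, height - crop)    # randint is inclusive on both ends
    left = rng.randint(0, width - crop)
    return (top, left, top + crop, left + crop)


def ten_crop_views(height, width, crop):
    """Return 10 (box, flipped) pairs: four corners plus center, each plain and flipped."""
    max_top, max_left = height - crop, width - crop
    offsets = [
        (0, 0), (0, max_left),              # top-left, top-right
        (max_top, 0), (max_top, max_left),  # bottom-left, bottom-right
        (max_top // 2, max_left // 2),      # center
    ]
    boxes = [(t, l, t + crop, l + crop) for t, l in offsets]
    return [(box, flipped) for box in boxes for flipped in (False, True)]


def average_predictions(predictions):
    """Element-wise mean over a list of equally long probability vectors."""
    count = len(predictions)
    return [sum(column) / count for column in zip(*predictions)]


views = ten_crop_views(256, 256, 224)
print(len(views))    # 10
print(views[8][0])   # center box: (16, 16, 240, 240)
```

At training time one box from `random_crop_box(256, 256, 224)` would be used per image; at prediction time the model would be run on all 10 views and the outputs passed to `average_predictions`.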

Network structure

TensorFlow implementation

First convolutional layer

    with tf.name_scope("conv1") as scope:
        # 11x11 kernels, 3 input channels, 64 filters
        kernel1 = tf.Variable(tf.truncated_normal([11, 11, 3, 64], mean=0.0, stddev=0.1,
                                                  dtype=tf.float32), name="weights")
        # Convolution with a stride of 4 both horizontally and vertically
        conv = tf.nn.conv2d(images, kernel1, [1, 4, 4, 1], padding="SAME")
        # Initialize the biases
        biases = tf.Variable(tf.constant(0.0, shape=[64], dtype=tf.float32), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        # ReLU activation
        conv1 = tf.nn.relu(bias, name=scope)
        # Print information about this layer's output
        print_tensor_info(conv1)
        # Collect the parameters
        parameters += [kernel1, biases]
    # LRN
    lrn1 = tf.nn.lrn(conv1, 4, bias=1.0, alpha=1e-3 / 9, beta=0.75, name="lrn1")
    # Max pooling
    pool1 = tf.nn.max_pool(lrn1, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1], padding="VALID", name="pool1")
    print_tensor_info(pool1)

Second convolutional layer
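The second layer, like the first, passes its ReLU output through `tf.nn.lrn` with a depth radius of 4, a bias of 1.0, `alpha=1e-3/9`, and `beta=0.75`. As a rough pure-Python sketch of what that normalization computes for the channel vector of a single pixel, following the formula given in the `tf.nn.lrn` documentation (the function name `lrn_across_channels` is hypothetical, and this is an illustration rather than TensorFlow's implementation):

```python
def lrn_across_channels(values, depth_radius=4, bias=1.0, alpha=1e-3 / 9, beta=0.75):
    """Normalize one pixel's channel vector.

    Each channel d is divided by (bias + alpha * sqr_sum) ** beta, where
    sqr_sum is the sum of squares over channels d - depth_radius .. d + depth_radius
    (clipped at the ends of the vector).
    """
    normalized = []
    for d, value in enumerate(values):
        lo = max(0, d - depth_radius)
        hi = min(len(values), d + depth_radius + 1)
        sqr_sum = sum(v * v for v in values[lo:hi])
        normalized.append(value / (bias + alpha * sqr_sum) ** beta)
    return normalized


activations = [0.0, 1.0, 50.0, 2.0, 0.5]
print(lrn_across_channels(activations))
```

Because every channel in the window shares the large squared sum contributed by the value 50.0, the weaker neighbors are scaled down by the same divisor, which is the "competition" effect described earlier.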

    with tf.name_scope("conv2") as scope:
        # Initialize the weights: 5x5 kernels, 64 input channels, 192 filters
        kernel2 = tf.Variable(tf.truncated_normal([5, 5, 64, 192], dtype=tf.float32, stddev=0.1),
                              name="weights")
        conv = tf.nn.conv2d(pool1, kernel2, [1, 1, 1, 1], padding="SAME")
        # Initialize the biases
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[192]),
                             trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        # ReLU activation
        conv2 = tf.nn.relu(bias, name=scope)
        print_tensor_info(conv2)
        parameters += [kernel2, biases]
    # LRN
    lrn2 = tf.nn.lrn(conv2, 4, bias=1.0, alpha=1e-3 / 9, beta=0.75, name="lrn2")
    # Max pooling
    pool2 = tf.nn.max_pool(lrn2, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1], padding="VALID", name="pool2")
    print_tensor_info(pool2)

Third convolutional layer

    with tf.name_scope("conv3") as scope:
        # Initialize the weights: 3x3 kernels, 192 input channels, 384 filters
        kernel3 = tf.Variable(tf.truncated_normal([3, 3, 192, 384], dtype=tf.float32, stddev=0.1),
                              name="weights")
        conv = tf.nn.conv2d(pool2, kernel3, strides=[1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, shape=[384], dtype=tf.float32), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        # ReLU activation
        conv3 = tf.nn.relu(bias, name=scope)
        parameters += [kernel3, biases]
        print_tensor_info(conv3)

Fourth convolutional layer

    with tf.name_scope("conv4") as scope:
        # Initialize the weights: 3x3 kernels, 384 input channels, 256 filters
        kernel4 = tf.Variable(tf.truncated_normal([3, 3, 384, 256], stddev=0.1, dtype=tf.float32),
                              name="weights")
        # Convolution
        conv = tf.nn.conv2d(conv3, kernel4, strides=[1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[256]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        # ReLU activation
        conv4 = tf.nn.relu(bias, name=scope)
        parameters += [kernel4, biases]
        print_tensor_info(conv4)

Fifth convolutional layer

    with tf.name_scope("conv5") as scope:
        # Initialize the weights: 3x3 kernels, 256 input channels, 256 filters
        kernel5 = tf.Variable(tf.truncated_normal([3, 3, 256, 256], stddev=0.1, dtype=tf.float32),
                              name="weights")
        conv = tf.nn.conv2d(conv4, kernel5, strides=[1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[256]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        # ReLU activation
        conv5 = tf.nn.relu(bias, name=scope)
        # Collect the variables (kernel5 and biases), not the bias_add output
        parameters += [kernel5, biases]
    # Max pooling
    pool5 = tf.nn.max_pool(conv5, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1], padding="VALID", name="pool5")
    print_tensor_info(pool5)

The last three fully connected layers
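The fully connected code reshapes `pool5` to `6*6*256` values per image. That size follows from the strides and padding modes of the preceding layers, and can be checked with a small calculation. The sketch below assumes a 224×224 input (the `images` tensor is not shown in this section, so the input size is an assumption taken from the crop size mentioned earlier), and the helper names are hypothetical.

```python
import math


def conv_same(size, stride):
    """Spatial output size of a SAME-padded convolution: ceil(size / stride)."""
    return math.ceil(size / stride)


def pool_valid(size, window=3, stride=2):
    """Spatial output size of a VALID pooling: floor((size - window) / stride) + 1."""
    return (size - window) // stride + 1


def alexnet_spatial_sizes(input_size=224):
    """Trace the feature-map side length through the layers defined in these notes."""
    sizes = {}
    sizes["conv1"] = conv_same(input_size, 4)    # 11x11 kernel, stride 4, SAME
    sizes["pool1"] = pool_valid(sizes["conv1"])  # 3x3 window, stride 2, VALID
    sizes["conv2"] = conv_same(sizes["pool1"], 1)
    sizes["pool2"] = pool_valid(sizes["conv2"])
    sizes["conv3"] = conv_same(sizes["pool2"], 1)
    sizes["conv4"] = conv_same(sizes["conv3"], 1)
    sizes["conv5"] = conv_same(sizes["conv4"], 1)
    sizes["pool5"] = pool_valid(sizes["conv5"])
    return sizes


sizes = alexnet_spatial_sizes(224)
print(sizes["conv1"], sizes["pool1"], sizes["pool2"], sizes["pool5"])  # 56 27 13 6
print(sizes["pool5"] ** 2 * 256)  # 9216 = 6*6*256
```

With a different input size the flattened dimension changes, so the hard-coded `6*6*256` only holds under the 224×224 assumption.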

    # Layer 6: first fully connected layer
    pool5 = tf.reshape(pool5, (-1, 6 * 6 * 256))
    weight6 = tf.Variable(tf.truncated_normal([6 * 6 * 256, 4096], stddev=0.1, dtype=tf.float32),
                          name="weight6")
    ful_bias1 = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[4096]), name="ful_bias1")
    ful_con1 = tf.nn.relu(tf.add(tf.matmul(pool5, weight6), ful_bias1))
    # Layer 7: second fully connected layer
    weight7 = tf.Variable(tf.truncated_normal([4096, 4096], stddev=0.1, dtype=tf.float32),
                          name="weight7")
    ful_bias2 = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[4096]), name="ful_bias2")
    ful_con2 = tf.nn.relu(tf.add(tf.matmul(ful_con1, weight7), ful_bias2))
    # Layer 8: third fully connected layer
    weight8 = tf.Variable(tf.truncated_normal([4096, 1000], stddev=0.1, dtype=tf.float32),
                          name="weight8")
    ful_bias3 = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[1000]), name="ful_bias3")
    ful_con3 = tf.nn.relu(tf.add(tf.matmul(ful_con2, weight8), ful_bias3))

Softmax layer

    # Map the 1000 features to 10 classes and apply softmax
    weight9 = tf.Variable(tf.truncated_normal([1000, 10], stddev=0.1, dtype=tf.float32), name="weight9")
    bias9 = tf.Variable(tf.constant(0.0, shape=[10], dtype=tf.float32), name="bias9")
    output_softmax = tf.nn.softmax(tf.matmul(ful_con3, weight9) + bias9)

Evaluating model performance

    import math
    import time
    from datetime import datetime

    def time_tensorflow_run(session, target, info_string):
        # num_bathes (the number of timed batches) is expected to be defined at module level
        # The first 10 iterations are warm-up and are not counted
        num_step_burn_in = 10
        total_duration = 0.0
        total_duration_squared = 0.0
        for i in range(num_bathes + num_step_burn_in):
            start_time = time.time()
            _ = session.run(target)
            duration = time.time() - start_time
            if i >= num_step_burn_in:
                if i % 10 == 0:
                    print("%s:step %d,duration=%.3f" % (datetime.now(), i - num_step_burn_in, duration))
                total_duration += duration
                total_duration_squared += duration * duration
        # Mean time per batch
        mn = total_duration / num_bathes
        # Standard deviation of the time per batch
        vr = total_duration_squared / num_bathes - mn * mn
        std = math.sqrt(vr)
        print("%s:%s across %d steps,%.3f +/- %.3f sec / batch" % (datetime.now(), info_string, num_bathes, mn, std))
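The timing function derives the mean and standard deviation from two running totals, using the identity Var(x) = E[x²] - (E[x])². The same calculation can be tried in isolation on a list of durations. This is a minimal sketch with a hypothetical helper name `mean_and_std`; the clamp to zero is an added safeguard that the function above does not have, since floating-point rounding can make the variance of nearly identical durations come out slightly negative and `math.sqrt` would then raise an error.

```python
import math


def mean_and_std(durations):
    """Population mean and standard deviation from the sum and the sum of squares."""
    count = len(durations)
    total = sum(durations)
    total_squared = sum(d * d for d in durations)
    mean = total / count
    variance = total_squared / count - mean * mean
    # Guard against a tiny negative variance caused by rounding
    std = math.sqrt(max(variance, 0.0))
    return mean, std


print(mean_and_std([1.0, 3.0]))  # (2.0, 1.0)
```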

Full code

    from datetime import datetime